Building code software is evolving fast, and the next winners won’t be libraries. They’ll be reasoning engines.

If you’ve been in AEC (architecture, engineering, and construction) long enough, you’ve lived through the same ritual a thousand times.

Someone asks a code question in the middle of a design conversation. Everyone pauses. A few people open PDFs. Someone searches a digital code library. Someone else messages the in-house code expert. And then, after 20 minutes of searching, cross-checking, and mild arguing, you get to a conclusion that sounds like:

“I think this is right. But let’s verify.”

That sentence is basically the unofficial motto of code compliance.

The reason is simple. Building codes are not a lookup problem. They are a reasoning problem.

And that is why code tools are about to go through a shift that looks a lot like the one the internet went through.

The internet didn’t get better because we had more websites

It got better because we had better interpretation.

In the early days of the web, finding information was hard. Not because the information didn’t exist, but because it wasn’t navigable. So the first big wave of products focused on search.

Yahoo was a directory. AltaVista was a search engine. Then Google came along and made search feel almost magical.

But here’s the important part.

Google didn’t win because it had more pages. Everyone had the same web.

Google won because it was better at ranking, context, and intent. It interpreted the web.

That is exactly what is happening right now in building code software.

The first era: codebooks, PDFs, and tribal knowledge

For decades, the code workflow was manual.

Codebooks. PDFs. Notes in margins. Internal templates. Seniors who had the logic memorized. Consultants for the scary edge cases.

This era worked, but it came with costs:

- juniors spent hours searching and still missed exceptions

- seniors became bottlenecks

- knowledge left when people left

- interpretations were inconsistent across teams

- and worst of all, the same research got repeated on every project

But it was still the best option. Because there wasn’t an alternative.

The second era: digital code libraries (the UpCodes era)

Then digital code libraries arrived, and for many firms it was a real upgrade.

Instead of PDFs, you had:

- online code access

- structured navigation

- fast search

- cross-linked references

- cleaner reading experience

UpCodes deserves a lot of credit here. It’s arguably best-in-class as a modern online code library. For everyday code lookup, it made the experience dramatically better.

This was the “Google Search” moment for building codes.

But it still didn’t solve the core problem.

Because even the best code library is still a library.

It helps you find text faster. It doesn’t turn text into decisions.

Why “finding the section” is not the same as “knowing what applies”

If you’ve ever trained a junior on code research, you’ve seen this firsthand.

They can find the right section.

And still get the answer wrong.

Because codes are full of things that look like traps but are there by design.

Definitions change meaning. Exceptions override rules. Cross-references require jumping across chapters. Local amendments flip triggers. Requirements depend on project facts. And a lot of compliance is conditional logic disguised as paragraphs.

Here are three common examples that show why search isn’t enough.

1) The exception chain problem

A requirement is stated clearly. Then an exception modifies it. Then another exception modifies the exception. Then a local amendment modifies the trigger.

Search gets you the base requirement. Reasoning gets you the governing condition.
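To make the exception chain concrete, here is a minimal Python sketch. Everything in it is hypothetical: the `Provision` class, the labels, the "feature" being required, and the `story_limit` trigger are invented for illustration and do not correspond to any real code section. The point is only that the governing condition comes from walking the chain against project facts, not from reading the base rule.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List

Facts = Dict[str, Any]


@dataclass
class Provision:
    """One node in a hypothetical exception chain."""
    label: str                        # placeholder label, not a real section number
    applies: Callable[[Facts], bool]  # trigger evaluated against project facts
    outcome: str                      # what governs if this node wins
    overrides: List["Provision"] = field(default_factory=list)  # exceptions to this node


def governing(provision: Provision, facts: Facts) -> Provision:
    """Walk down the chain: the deepest applicable override governs."""
    for override in provision.overrides:
        if override.applies(facts):
            return governing(override, facts)
    return provision


def build_chain(story_limit: int) -> Provision:
    # story_limit stands in for a trigger that a local amendment might change.
    exception_to_exception = Provision(
        label="Exception 1.1 (hypothetical)",
        applies=lambda f: f["stories"] > story_limit,
        outcome="feature required",
    )
    exception = Provision(
        label="Exception 1 (hypothetical)",
        applies=lambda f: f["sprinklered"],
        outcome="feature not required",
        overrides=[exception_to_exception],
    )
    return Provision(
        label="Base requirement (hypothetical)",
        applies=lambda f: True,
        outcome="feature required",
        overrides=[exception],
    )


facts = {"sprinklered": True, "stories": 4}
model = governing(build_chain(story_limit=4), facts)    # trigger as written
amended = governing(build_chain(story_limit=3), facts)  # trigger after a local amendment

print(model.label, "->", model.outcome)
print(amended.label, "->", amended.outcome)
```

With the same project facts, the unamended chain ends at the first exception ("feature not required"), while the amended trigger pushes the result down to the exception to the exception ("feature required"). A search for the base requirement would return the same text in both cases.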

2) The multi-code dependency problem

An answer might require:

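- the International Building Code (IBC) for core requirements

- the International Fire Code (IFC) for operational constraints

- National Fire Protection Association (NFPA) standards for referenced conditions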

- local amendments for adoption differences

A tool can retrieve IBC language and still miss the actual controlling logic.

3) The calculation and assumption problem

Occupant load. Plumbing fixtures. Egress widths. Fire areas. Travel distances.

These are not lookup tasks. They require computation and assumptions. If the assumptions aren’t explicit, the answer can’t be trusted.
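As a rough illustration of what "explicit assumptions" can look like, here is a small Python sketch of an occupant load calculation. The names (`Space`, `LoadResult`, `occupant_load`) are made up for this example, and the load factors are placeholder inputs supplied by the caller, not values to rely on; the real factors, and whether to round per space or on the total, depend on the adopted code and must be verified. The sketch assumes rounding up per space.

```python
import math
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Space:
    name: str
    area_sf: float          # floor area in square feet
    sf_per_occupant: float  # load factor supplied by the caller (assumption)
    factor_source: str      # where the caller says the factor came from


@dataclass(frozen=True)
class LoadResult:
    occupants: int
    assumptions: List[str]  # one human-readable line per space


def occupant_load(spaces: List[Space]) -> LoadResult:
    """Sum per-space loads, rounding each space up, and record every input used."""
    total = 0
    assumptions = []
    for s in spaces:
        if s.sf_per_occupant <= 0:
            raise ValueError(f"{s.name}: load factor must be positive")
        load = math.ceil(s.area_sf / s.sf_per_occupant)
        total += load
        assumptions.append(
            f"{s.name}: {s.area_sf:g} sf / {s.sf_per_occupant:g} sf per occupant "
            f"= {load} ({s.factor_source})"
        )
    return LoadResult(occupants=total, assumptions=assumptions)


# Placeholder factors for illustration only; verify against the adopted code.
result = occupant_load([
    Space("Open office", 3100, 150, "assumed factor, unverified"),
    Space("Conference room", 450, 15, "assumed factor, unverified"),
])

print(result.occupants)
for line in result.assumptions:
    print(line)
```

The number alone (51 here) is not the useful output. The list of assumptions is what lets a reviewer see which factor was applied to which space and challenge it.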

This is where most AI tools fail too. They can generate language. They can’t consistently reason.

The third era: AI code tools (and the trust crisis)

When foundational AI models became widely available, the AEC industry did what it always does.

It tried them immediately.

Architects and engineers asked code questions and got answers in seconds. For a moment, it felt like the future had arrived.

Then reality hit.

A lot of those answers were wrong. Or incomplete. Or right in a generic sense but wrong in a project-specific sense.

And because the answers sounded confident, the experience was worse than slow research. It felt risky.

That’s when the industry developed a new instinct:

Don’t trust AI for code.

This is the trust crisis in AEC AI.

And it wasn’t irrational skepticism. It was learned behavior.

What’s changing now: the shift from AI answers to AI reasoning

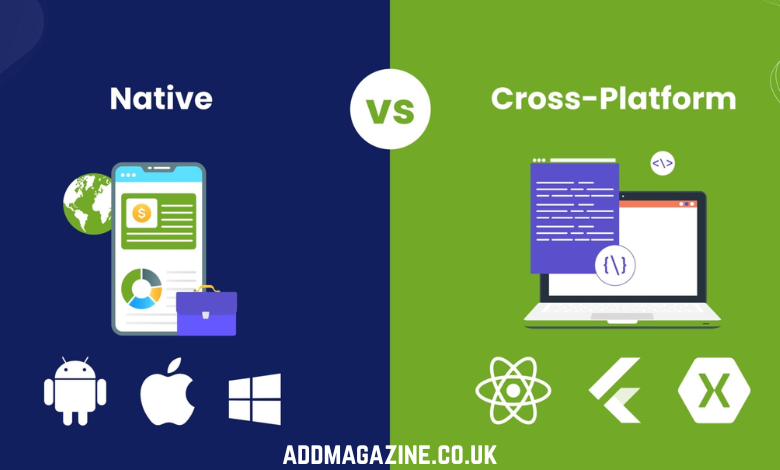

The market is now splitting into two kinds of products.

Lane 1: AI layered on top of search

This is useful. It speeds up reading. It summarizes sections. It helps navigate.

But it still breaks down in complex scenarios.

Lane 2: AI built as a code reasoning system

This is the more important shift.

Instead of treating codes as text, it treats codes as logic.

Instead of producing an answer, it produces a defensible conclusion.

And most importantly, it shows how it got there.

This is where code tools are about to have their “Google moment” again.

Not in search.

In reasoning.

Why MeltPlan’s Melt Code feels like the next layer

MeltPlan’s Melt Code is a strong example of this new lane.

What makes it different is not that it uses AI. Everyone uses AI now.

It’s that Melt Code is built around a specific idea:

Compliance breaks at reasoning, not search.

So the system is designed to behave like a code expert:

- use project context (occupancy, construction type, sprinkler status, etc.)

- follow definitions and triggers

- evaluate exceptions

- connect multiple documents

- apply jurisdiction logic and amendments

- show reasoning paths so humans can verify and defend decisions

That “show your work” layer changes everything.

Because in AEC, trust doesn’t come from confidence. It comes from auditability.
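One way to picture auditability is as a data structure where a conclusion cannot be separated from the steps and citations behind it. The Python sketch below is purely illustrative: `Step`, `Conclusion`, and `is_auditable` are invented names, the citations are placeholder strings, and nothing here describes how Melt Code or any other product works internally.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Step:
    claim: str     # what was decided at this step
    citation: str  # where a reviewer can check it (placeholder text here)


@dataclass
class Conclusion:
    question: str
    answer: str
    steps: List[Step] = field(default_factory=list)

    def is_auditable(self) -> bool:
        # Auditable only if there is at least one step and every step is cited.
        return bool(self.steps) and all(s.citation.strip() for s in self.steps)

    def report(self) -> str:
        lines = [f"Q: {self.question}", f"A: {self.answer}"]
        for i, s in enumerate(self.steps, start=1):
            lines.append(f"  {i}. {s.claim} [{s.citation or 'NO CITATION'}]")
        return "\n".join(lines)


cited = Conclusion(
    question="Is feature X required? (hypothetical)",
    answer="Not required",
    steps=[
        Step("Project is sprinklered", "project facts sheet"),
        Step("Exception applies to sprinklered buildings", "placeholder section reference"),
    ],
)
uncited = Conclusion(
    question="Is feature X required? (hypothetical)",
    answer="Not required",
    steps=[Step("It is probably fine", "")],
)

print(cited.report())
print(cited.is_auditable(), uncited.is_auditable())
```

Both conclusions give the same confident answer. Only the first one can be checked step by step, which is the difference the "show your work" layer is meant to capture.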

The bigger implication: code compliance is becoming computable

This is the real story underneath the product wave.

For decades, compliance was a human-only activity because it required interpretation. That meant it was expensive, slow, inconsistent, and dependent on a small number of experts.

If code reasoning becomes computable, even partially, it changes the structure of firms.

Juniors move faster with less risk.

Seniors become reviewers, not bottlenecks.

Knowledge becomes reusable.

Compliance becomes a workflow, not a fire drill.

And permitting becomes less chaotic because decisions are documented, cited, and consistent.

Search was the first revolution. Reasoning is the next one.

Digital libraries were the first big wave, and they were necessary. They made code accessible and searchable.

But search is not the finish line. It’s the starting point.

The next winners won’t be the tools that help you find the section faster.

They’ll be the tools that help you answer the real question:

“What does the code actually require here, and how do we defend it?”

That is the shift from search to reasoning.

And it’s why building code software is about to have its next major platform moment.